Back to my homepage.

AffectME: Affective Multimodal Engagement

A project funded by a Marie Curie International Re-Integration Grant (MIRG-CT-2006-046434), 2007-2009 (2010).

The goal of this project is to contribute to the development of technologies that improve their users' sense of engagement or immersion (positive usability) by taking into account their affective states. The proposal focuses on body posture as an indicator of human affective states. A comprehensive framework for the study of affective postural displays is outlined that relies on a computationally tractable characterization of emotion in terms of the intensities of its autonomic response, its communicative intent, and the influence of cultural factors.

To address the current lack of affective posture data collected in context, we propose a model-driven, quantitative approach to the acquisition of posture data in three case studies that maximize the information that can be obtained about (a) how context affects postural affective displays and (b) which parameters technology can control.

Three case studies are driving this research:

Posture as an indicator of pain in a clinical context: Although pain, as such, is not an emotion, it is associated with a set of negative emotions (in particular, frustration, anxiety and fear) that are expressed at the postural level. The particular interest of this study is that the stability of the affective states involved should help clarify the interrelationship between autonomic response and communicative intent. Indeed, it has been observed that pain behaviour often increases in amplitude in the presence of solicitous others and/or health professionals.

Posture as an indicator of attention or frustration in a technologically-mediated learning scenario: This scenario offers a good platform for the study of the interrelationship between the timing of task-related events and the occurrence of mood shifts, and the study of how the valence of emotions affects task performance.

Posture as an indicator of immersion in a gaming scenario: Immersion is traditionally evaluated in terms of subjective reporting. This scenario provides an ideal platform for assessing the relationship between immersion and autonomic response, and its hypothetical suppression by task-induced attentional load.

A second aim of this project is to propose a robust alternative to the methods currently used for determining the intended signal of affective displays. Multi-modal cross-validation is realized by complementing the motion capture data with recordings from other modalities such as bio-feedback and eye-tracking, or by studying the perception of synthetic avatars in which the congruence of different modalities of emotion expression (e.g., facial expressions and body postures) is manipulated.

The final aim of this project is to contribute the design and implementation of a computational model for the contextual recognition of affect from body posture, an essential step toward designing systems that can recognize, and therefore regulate, the affective states of their users in order to achieve positive usability.

Research student

Collaborators in the Pain study

- Amanda C. de C. Williams, Clinical Health Psychology, University College London

- Suzanne Brook, Clinical Specialist Physiotherapist in Pain Management, Pain Management Dept., Queen Mary Wing, The National Hospital for Neurology and Neurosurgery

The UCLIC Database of Affective Postures and Body Movements

We are creating a database of affective postures and affective body movements. If you are interested in using it for academic purposes, please contact us at n.berthouze@ucl.ac.uk or alk@cise.ufl.edu

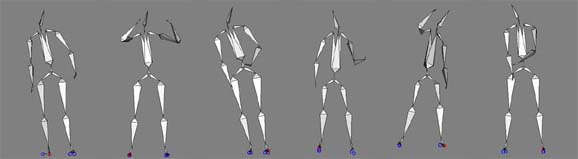

- Acted emotions: angry, fearful, happy, sad. The data have been collected using a VICON motion capture system.

- Non-acted affective states in a computer game setting: frustration, concentration, triumphant, defeated. The data have been collected using a Gypsy5 (Animazoo UK Ltd.) motion capture system.

- Non-acted affective states in a clinical setting (not yet available for distribution).

Publications

For a full list of publications, click here and follow the publication link.
